Category: Education

-

December 8: Horace

Yesterday, we briefly described how Cicero’s philosophy, rhetoric, and insight into human nature influenced Martianus Capella, Augustine, and Petrarch. Today, we look at another Roman author, Quintus Horatius Flaccus, born December 8, 65 B. C., the Latin lyric poet who was Cicero’s and Vergil’s contemporary during the upheaval that ended the Roman Republic and began…

-

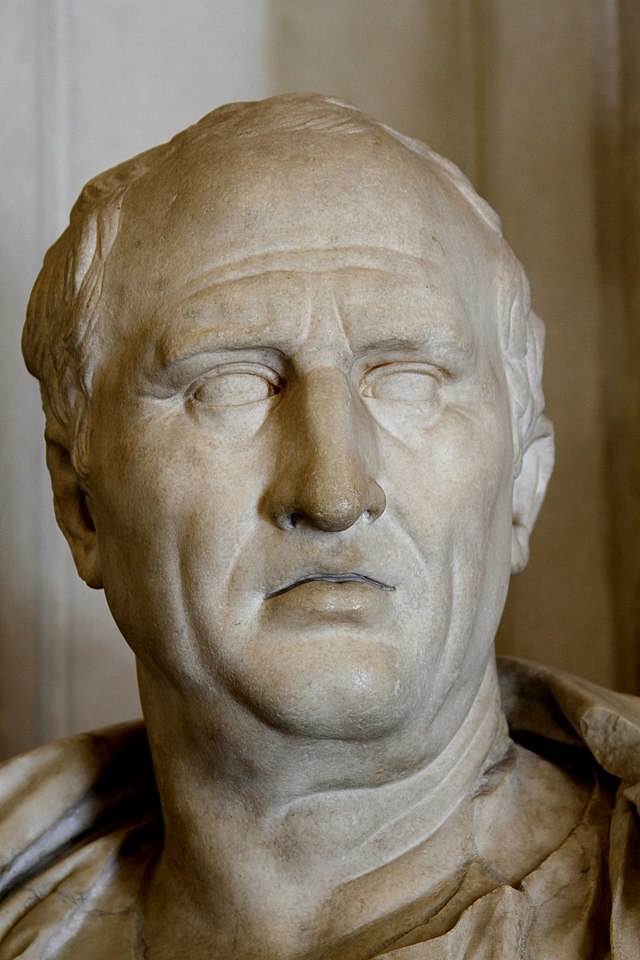

December 7: Cicero

On December 7, 43 B. C., Marcus Tullius Cicero died at Formia, Italy. He lived in the chaotic period that included the end of the Roman Republic and the beginning of the Roman Empire, and it is impossible to assess how thoroughly the observations and descriptions in his speeches, dialogues, treatises, and letters shaped western…

-

December 5: Clement of Alexandria

December 5 is the commemoration in some traditions of Clement of Alexandria (ca. A. D. 150-215). Clement is the earliest proponent of classical Christian education that we know much about. The son of apparently well-to-do pagan parents and born in Athens or possibly Alexandria, he was educated in Greek philosophy and literature as a youth…