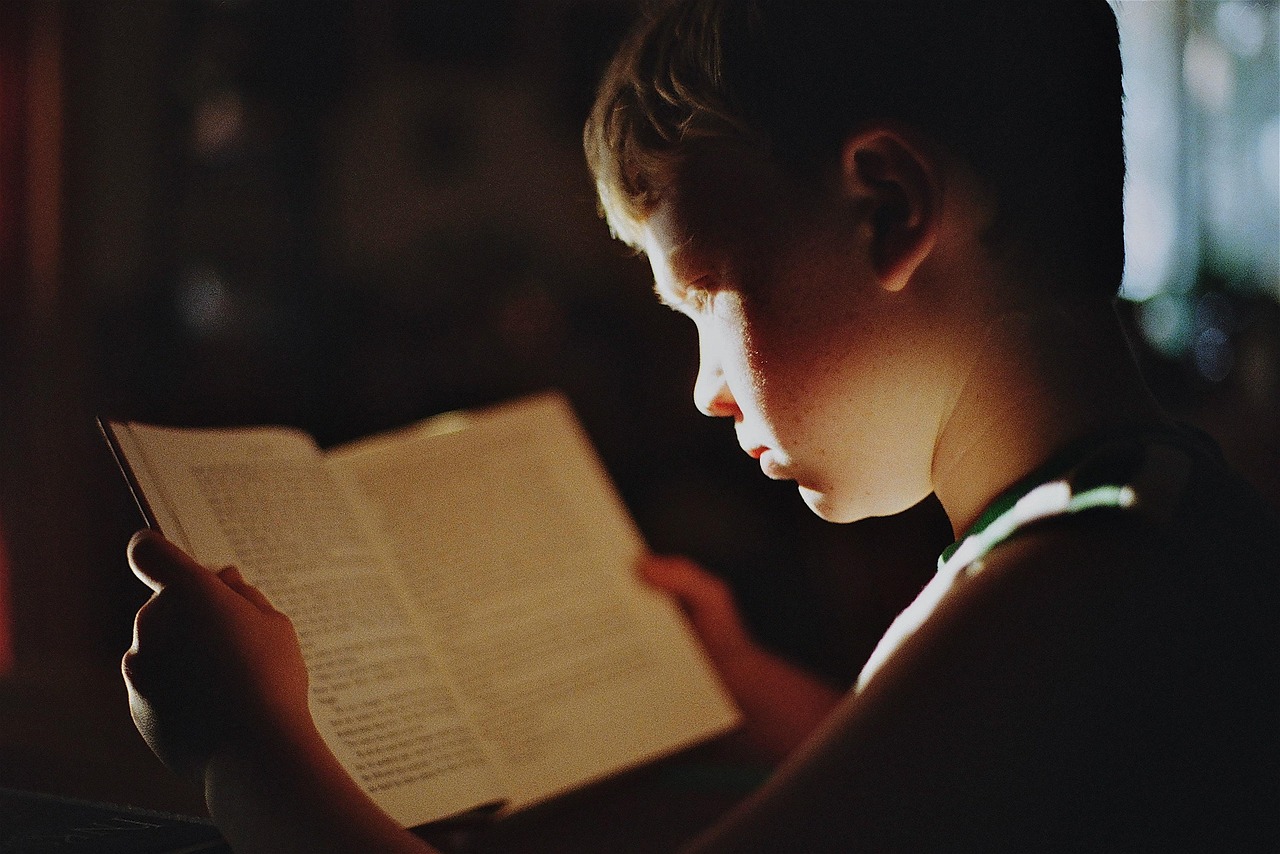

The November 2024 issue of The Atlantic contains an article (“The Elite College Students Who Can’t Read Books”) that has been raising eyebrows and ire since. In it, Rose Horowitch notes that academics at some prestigious universities have concluded that few incoming…

The traditional school year in the United States and much of the rest of the world has been faulted for inefficiency: surely we could get more learning done, or do the same amount in less time, by simply continuing throughout…

One of the things about watching movies or TV shows multiple times is that certain phrases stick: they come back to you, triggered by odd connections your brain makes between the quotation and current experience. So today, looking through the…

On December 16, 1497, the Portuguese explorer Vasco da Gama reached the mouth of the Great Fish River on the eastern side of the Cape of Good Hope in South Africa. Thus far had his predecessor Bartolomeu Dias come in…

I like bridges. When I was in grammar school, there was a pedestrian footbridge over the flood control channel that ran along the playground boundary. There were students who lived “beyond the wash” and got to use the bridge every…

Very early in the morning, before sunrise certainly, because it was pitch dark, on December 13 of my freshman year at college, someone banged loudly on my door. Stumbling out of bed, unable to find my glasses, and assuming there…

On December 12, 1966, Columbia Pictures released a movie based on a long-running British play about the Lord Chancellor of England under Henry VIII, a gentleman named Thomas More. While there is plenty to learn about the historical More as…

There are days when fatigue sets in early as the repetitive nature of certain tasks drains away any enthusiasm I might have had for studying or teaching or experimenting with new ways to present ideas or organize information. On the…

In November of 1684, Edmund Halley received a letter from Isaac Newton in answer to a question he had asked earlier that year. The question (based on Halley’s account some years later) asked for a description of the “Curve that…

One of the lesser-known saints on the Roman Catholic calendar has his feast today: Peter Fourier. Fourier did not hold a high episcopal office, nor was he a prolific writer; he attracted my attention in the list of events,…

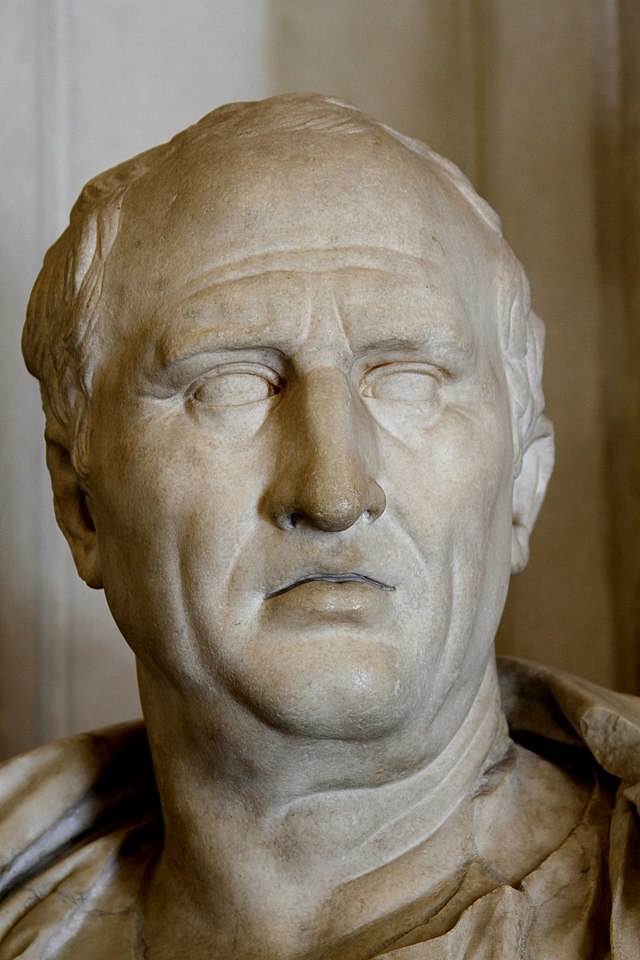

Yesterday, we described briefly how Cicero’s philosophy, rhetoric, and insight into human nature influenced Martianus Capella, Augustine, and Petrarch. Today, we look at another Roman author, Quintus Horatius Flaccus, born December 8, 65 B. C., the Latin lyric poet who…

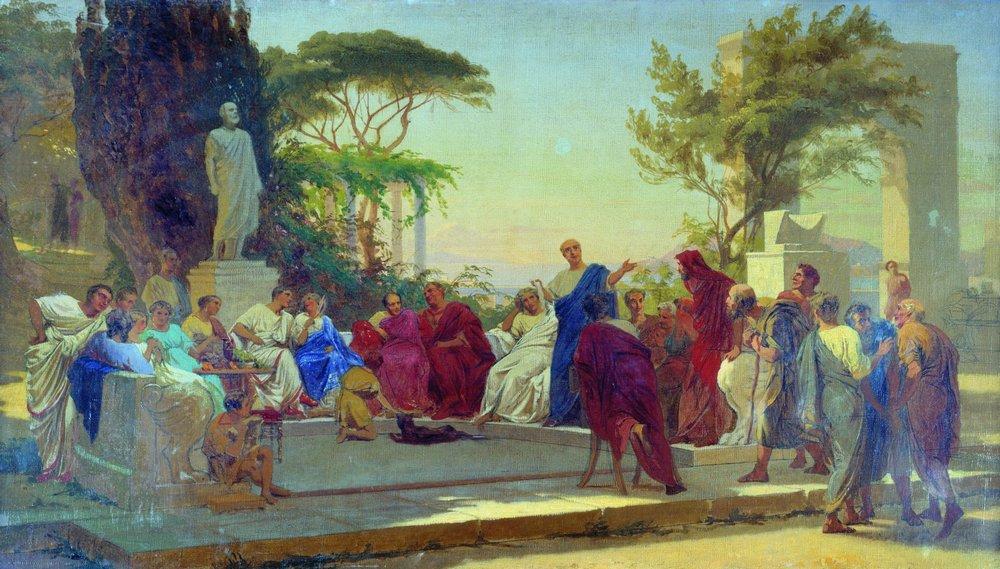

On December 7, 43 B. C., Marcus Tullius Cicero died at Formia, Italy. He lived in the chaotic period that included the end of the Roman Republic and the beginning of the Roman Empire, and it is impossible to assess…